MinT

RL training infrastructure for LLMs. You write the loop. We handle the GPUs.

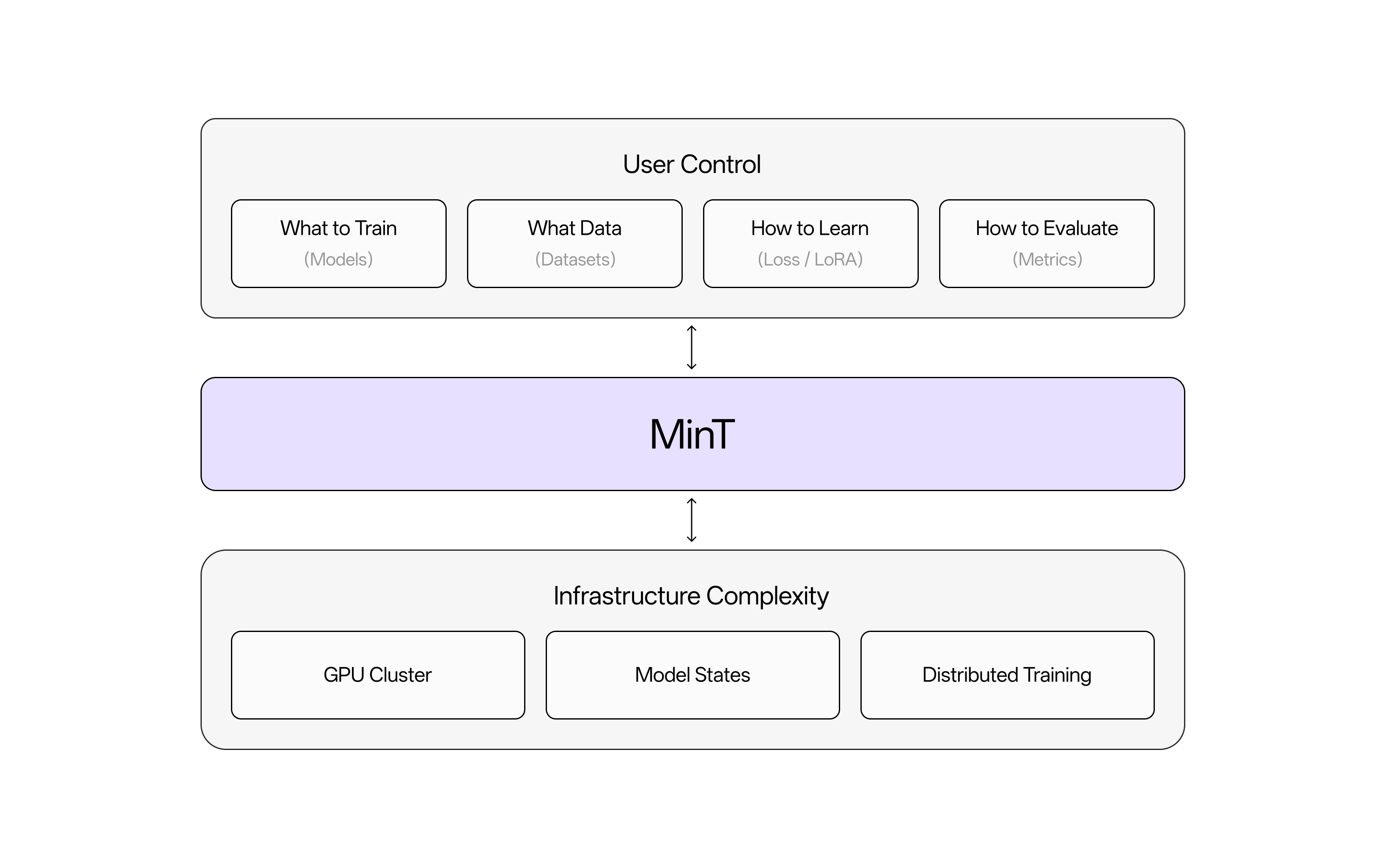

MinT lets you focus on what matters in LLM post-training (your data, your loss functions, and your RL environments) while we handle the heavy lifting of distributed training. You write a simple Python script that defines your data or environment and your loss function, and you run it on a CPU-only machine. We work out how to run the training efficiently on a cluster of GPUs, performing exactly the computation you specified. To switch to a different model, you change a single string in your code.

MinT gives you full control over the training loop and all the algorithmic details. It's not a magic black box that makes fine-tuning "easy". It's a clean abstraction that shields you from the complexity of distributed training while preserving your control.
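To make the division of labor concrete, here is a minimal, self-contained sketch of the pattern described above. It does not use the MinT SDK: `StubTrainer`, its `forward_backward` and `optim_step` methods, and the model string `"example-org/example-model"` are hypothetical stand-ins invented for illustration, and the "model" is a single number rather than an LLM. The point is only the shape of the script: you own the data, the loss function, and the loop, while something else owns the weights and the compute.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Batch = List[Tuple[float, float]]
LossFn = Callable[[float, float], float]


@dataclass
class StubTrainer:
    """Hypothetical local stand-in for a remote training service.

    NOT the MinT SDK. It holds a toy one-parameter model (y = weight * x)
    so the example runs anywhere without GPUs or network access.
    """
    base_model: str      # the single string you would change to switch models
    weight: float = 0.0
    lr: float = 0.05
    grad: float = 0.0

    def forward_backward(self, batch: Batch, loss_fn: LossFn) -> float:
        """Compute the mean loss and store its gradient w.r.t. the weight."""
        def mean_loss(w: float) -> float:
            return sum(loss_fn(w * x, y) for x, y in batch) / len(batch)

        eps = 1e-6
        # Central finite difference, so any user-supplied loss works.
        self.grad = (mean_loss(self.weight + eps)
                     - mean_loss(self.weight - eps)) / (2 * eps)
        return mean_loss(self.weight)

    def optim_step(self) -> None:
        """Apply one plain gradient-descent update."""
        self.weight -= self.lr * self.grad


# ---- Everything below is the part you would write yourself ----

def squared_error(pred: float, target: float) -> float:
    """User-defined loss function."""
    return (pred - target) ** 2


# User-defined data: samples from y = 2x.
DATA: Batch = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]


def train(model_name: str, steps: int = 200):
    """User-defined training loop; the model is chosen by one string."""
    trainer = StubTrainer(base_model=model_name)
    losses = []
    for _ in range(steps):
        losses.append(trainer.forward_backward(DATA, squared_error))
        trainer.optim_step()
    return trainer, losses


if __name__ == "__main__":
    trainer, losses = train("example-org/example-model")  # placeholder name
    print(f"first loss: {losses[0]:.4f}, last loss: {losses[-1]:.6f}")
    print(f"learned weight: {trainer.weight:.4f}")
```

In this sketch, swapping the loss or the data means editing only the user-side functions, and switching models means changing the string passed to `train`. The actual MinT client calls and their names should be taken from the SDK documentation rather than from this stand-in.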

Your code runs on the client side through the MinT SDK. Requests go through an API Gateway to MinT's core services — Model Router, Task Manager, and LoRA Manager — which coordinate distributed training across GPU clusters, object storage, and databases. You only see the client SDK; the server-side complexity is handled for you.

Human Quickstart

Install the SDK, set your API key, and run your first training job in seven steps.

Prompts for Vibecoders

Feed the full MinT documentation index to Cursor or Claude Code via /llms.txt.

Looking for production-grade MinT? See MinT Enterprise for managed deployments, dedicated capacity, and tailored case studies in government, finance, and research.